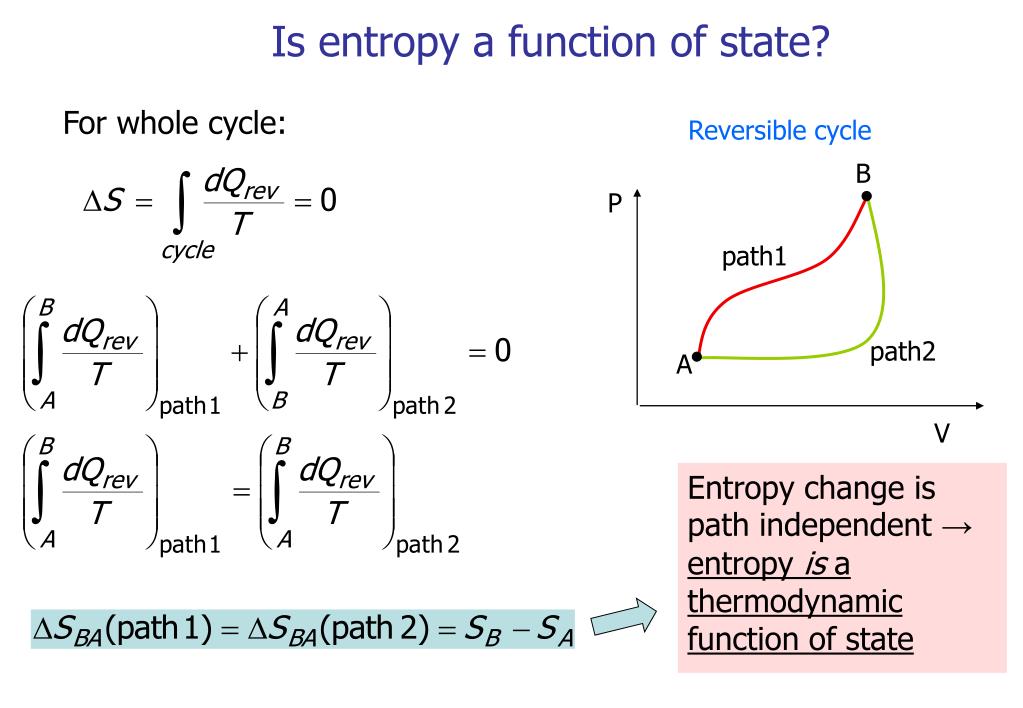

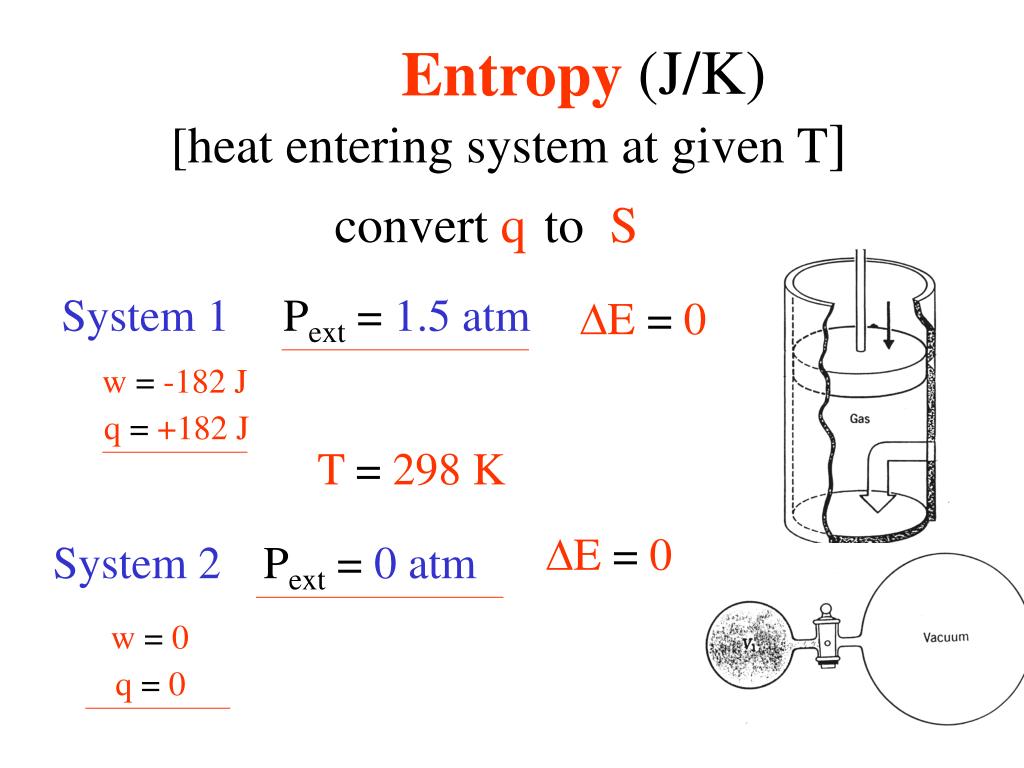

Entropy ($S$) is the thermodynamic measure of randomness throughout a system, often simplified as "disorder". It is particularly important when describing how energy is used and transferred within a system. Entropy is also often described as a measure of the thermal energy that is not available to do work, since energy becomes more evenly distributed as a system becomes more disordered. For the same substance, the entropy of a solid (closely packed particles) is lower than that of a gas (particles free to move).

The entropy of a spontaneous process must rise. This statement takes into account the entropy of the system as well as the entropy of its surroundings: it is the total that increases.

An exact value of entropy cannot be measured directly. However, through relationships derived by Josiah Willard Gibbs and James Clerk Maxwell, the change in entropy between one state and another can be calculated from measurable quantities such as temperature and pressure. There are several equations for calculating it:

1) If the process takes place reversibly at a constant temperature $T$, the entropy change is the heat absorbed divided by that temperature:

$$\Delta S = \frac{q_{rev}}{T}$$

2) If the reaction is known, a table of standard entropy values can be used to find $\Delta S_{rxn}$:

$$\Delta S_{rxn} = \Sigma S^\circ_{products} - \Sigma S^\circ_{reactants}$$

Here $\Delta S_{rxn}$ is the entropy change of the reaction, $\Sigma S^\circ_{products}$ is the sum of the standard entropies of the products, and $\Sigma S^\circ_{reactants}$ is the sum of the standard entropies of the reactants, each weighted by its coefficient in the balanced equation.

3) It is also possible to find $\Delta S$ from the Gibbs free energy ($\Delta G$) and the enthalpy ($\Delta H$):

$$\Delta G = \Delta H - T \Delta S \quad\Rightarrow\quad \Delta S = \frac{\Delta H - \Delta G}{T}$$

If the entropy of the universe has been increasing steadily, it must have been much lower in the past. This is often cited as consistent with the Big Bang theory, in which the universe began in an extremely compact, highly ordered state.
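The three standard ways of calculating an entropy change (heat over temperature, tabulated standard entropies, and the Gibbs relation) can be sketched in a few lines of Python. This is only an illustrative sketch: the function names are made up for this example, and the numbers for the formation of liquid water from hydrogen and oxygen are approximate textbook values at 298.15 K that should be checked against a proper data table before being relied on.

```python
def delta_s_isothermal(q_rev_joules, temperature_kelvin):
    """Entropy change for reversible heat transfer at constant T: q_rev / T."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive on the Kelvin scale")
    return q_rev_joules / temperature_kelvin


def delta_s_reaction(products, reactants):
    """Sum of n * S for the products minus the same sum for the reactants.

    Each argument is a list of (moles, standard entropy in J/(mol K)) pairs.
    """
    def side_total(side):
        return sum(moles * s_standard for moles, s_standard in side)

    return side_total(products) - side_total(reactants)


def delta_s_from_gibbs(delta_h_joules, delta_g_joules, temperature_kelvin):
    """Rearranged Gibbs relation: (delta H - delta G) / T."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive on the Kelvin scale")
    return (delta_h_joules - delta_g_joules) / temperature_kelvin


# Assumed, approximate values for 2 H2(g) + O2(g) -> 2 H2O(l) at 298.15 K.
S_H2, S_O2, S_H2O_LIQUID = 130.7, 205.1, 69.9  # J/(mol K)
DELTA_H = 2 * -285.8e3                         # J, for 2 mol of water
DELTA_G = 2 * -237.1e3                         # J, for 2 mol of water

from_table = delta_s_reaction(
    products=[(2, S_H2O_LIQUID)],
    reactants=[(2, S_H2), (1, S_O2)],
)
from_gibbs = delta_s_from_gibbs(DELTA_H, DELTA_G, 298.15)

print(f"From the entropy table:  {from_table:.1f} J/K")
print(f"From the Gibbs relation: {from_gibbs:.1f} J/K")
```

With these inputs both routes give roughly -327 J/K. The sign is negative because three moles of gas become two moles of liquid, so the system ends up more ordered; the reaction is still spontaneous because the heat it releases raises the entropy of the surroundings by more.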
Entropy is a thermodynamic function that is used to quantify a system's uncertainty or disorder. It is represented by $S$, or by $S^\circ$ in the standard state. It is a state function: its value is determined by the condition of the system, not by the path taken to reach it. Entropy is also an extensive property, meaning it grows in proportion to the size of a system.

If the number of particles in a process is fixed ($dN = 0$), then the change in internal energy is:

$$dU = T\,dS - P\,dV$$

On the other hand, we have energy conservation:

$$dU = \delta Q + \delta W$$

As the work is $\delta W = -P\,dV$, we get the relation between heat and entropy:

$$\delta Q = T\,dS$$

A flow of heat into the system therefore increases the system's entropy. In this equation, the temperature must be measured on the absolute, or Kelvin, scale. On this scale, zero is the lowest temperature that any substance may theoretically reach.

For a body in thermodynamic equilibrium, entropy has a fixed value and is at its maximum. When bodies of matter or radiation, each in its own state of internal thermodynamic equilibrium, are brought together so that they interact intimately and reach a new joint equilibrium, their total entropy rises. A glass of warm water with an ice cube in it, for example, has a lower entropy than the same system after the ice has melted and left a glass of cool water. Processes like this are irreversible: a glass of cool water will not spontaneously turn back into an ice cube in a glass of warm water. Some natural processes are almost reversible. The orbiting of the planets around the sun, for example, can be considered essentially reversible: a movie of the planets played backwards would not look impossible.

Entropy is a measure of chaos that has an impact on many facets of our existence. We consider something to be more entropic if it is more chaotic. In fact, entropy is akin to a tax imposed by nature: energy dissipates, systems disintegrate, and a problem that is not addressed tends to worsen over time.
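The ice cube in warm water can be put into numbers using the heat-over-temperature relation for each body. The sketch below makes a simplifying assumption that is not in the text above: both the ice and the water are treated as staying at a fixed temperature while a small amount of heat moves between them. The function name and the figure of 334 J (roughly the heat needed to melt one gram of ice) are illustrative.

```python
def total_entropy_change(heat_joules, t_cold_kelvin, t_hot_kelvin):
    """Total entropy change when heat moves from a hot body to a cold body.

    Both bodies are assumed to stay at constant temperature (a reservoir
    approximation). A negative heat_joules describes the reverse flow,
    from the cold body to the hot one.
    """
    if not 0 < t_cold_kelvin <= t_hot_kelvin:
        raise ValueError("need 0 < t_cold <= t_hot, in kelvin")
    gained_by_cold = heat_joules / t_cold_kelvin   # cold body absorbs the heat
    lost_by_hot = -heat_joules / t_hot_kelvin      # hot body gives it up
    return gained_by_cold + lost_by_hot


ICE_K = 273.15         # melting ice
WARM_WATER_K = 300.0   # assumed temperature of the warm water
HEAT_J = 334.0         # roughly enough to melt 1 g of ice

forward = total_entropy_change(HEAT_J, ICE_K, WARM_WATER_K)
reverse = total_entropy_change(-HEAT_J, ICE_K, WARM_WATER_K)

print(f"Ice melting in warm water: {forward:+.3f} J/K")
print(f"Water refreezing itself:   {reverse:+.3f} J/K")
```

The forward direction gives a small positive total, because the same heat counts for more entropy at the lower temperature. The reverse direction gives a negative total, which is why it is never observed to happen on its own.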
In everyday use, the term "entropy" means a lack of order or predictability, as well as a steady descent towards chaos. A more physical explanation of thermodynamic entropy is the dispersal of energy or matter, or the amount and diversity of microscopic motion. An object's entropy is also related to the amount of its energy that is unavailable to do work.

Entropy cannot continue to grow endlessly. A body of matter and radiation will eventually reach an unchanging state with no discernible flows, which is known as thermodynamic equilibrium.

Entropy is also a measure of the number of alternative configurations available to the atoms in a system. In this sense, it is a measure of uncertainty or unpredictability.
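The link between entropy and the number of configurations is usually written as Boltzmann's formula, $S = k_B \ln W$, where $W$ is the number of microscopic arrangements that look the same from the outside. The post does not state this formula itself, so take the sketch below as a standard statistical-mechanics illustration. The toy system (particles that each sit in the left or right half of a box) and the function names are assumptions made for the example.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact by SI definition)


def count_arrangements(n_particles, n_left):
    """Number of ways to choose which particles sit in the left half."""
    return math.comb(n_particles, n_left)


def boltzmann_entropy(n_arrangements):
    """Boltzmann's formula: S = k_B * ln(W)."""
    if n_arrangements < 1:
        raise ValueError("there must be at least one arrangement")
    return K_B * math.log(n_arrangements)


N = 100
for n_left in (0, 10, 50):
    w = count_arrangements(N, n_left)
    s = boltzmann_entropy(w)
    print(f"{n_left:3d} of {N} on the left: W = {w:.3e}, S = {s:.3e} J/K")
```

With every particle on one side there is only one arrangement, so the entropy is zero. The even split has by far the most arrangements and therefore the highest entropy, which is why a gas left alone spreads out to fill its container.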

In thermodynamics, entropy is a numerical quantity that captures the fact that many physical processes can only go in one direction in time. For example, you can pour cream into coffee and mix it, but you can't "unmix" it, just as you can't "unburn" a piece of wood.
